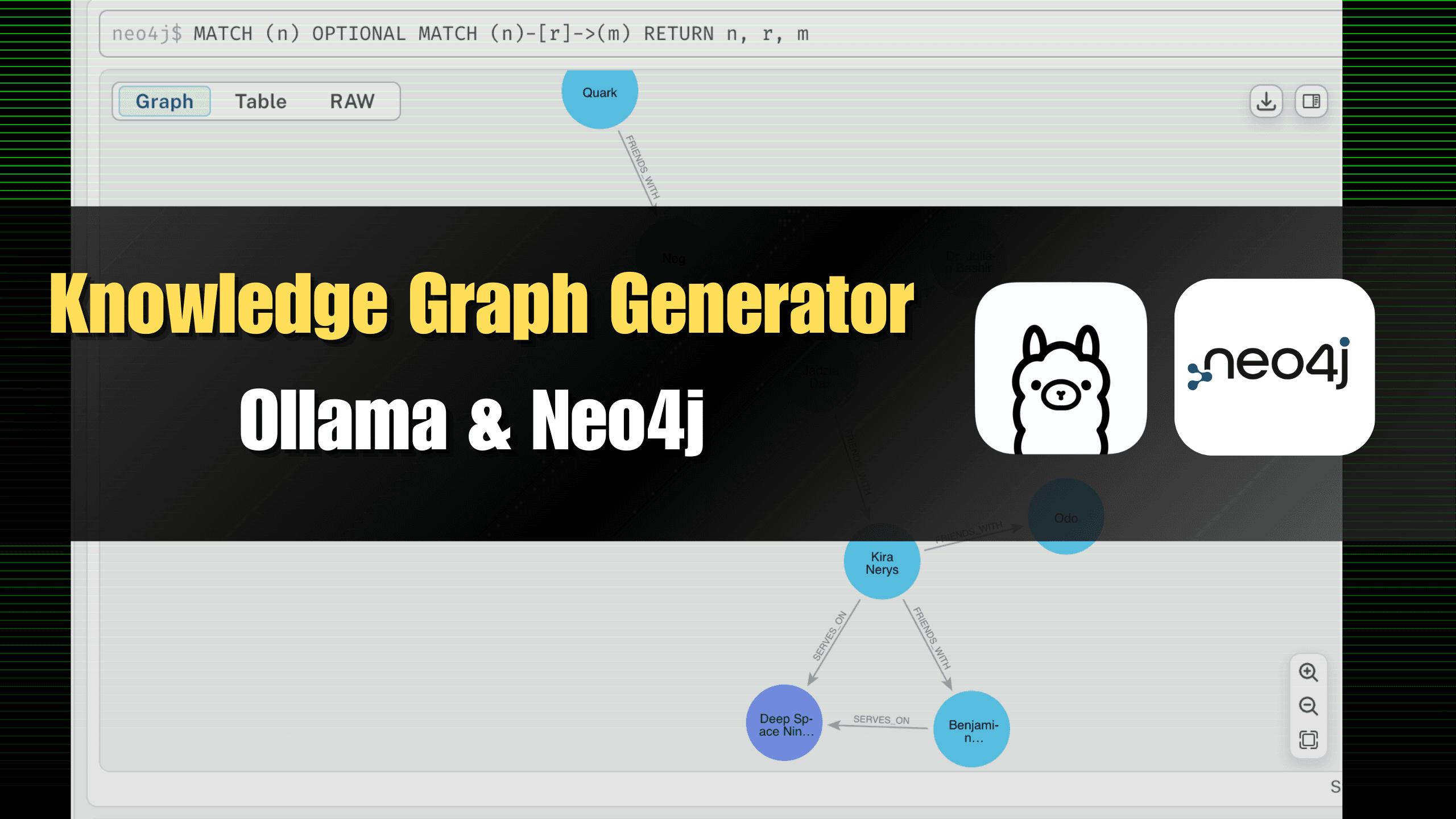

Building a Knowledge Graph Locally with Neo4j & Ollama

Knowledge graphs, also known as semantic networks, are a specialized application of graph databases used to store information about entities (people, locations, organizations, etc.) and their relationships. They allow you to explore your data with an in...

blog.greenflux.us · 16 min read

#neo4j #graph-database #knowledge-graph #python #python3 #huggingface #llm #cypher #macos #obsidian #ollama #ontology

Wily Ktpm

Really cool to see a local setup like this. I’ve been using Neo4j for a project tracking software dependencies and tech stack relationships, and embedding nodes with LLM-generated descriptions made querying for “which services depend on this deprecated library” feel almost like magic.
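
For anyone curious what that kind of "who depends on this library" lookup amounts to, here is a rough sketch. It is not the setup described above: the `Service` and `Library` labels, the `DEPENDS_ON` relationship, and the sample data are all made up for illustration. The Cypher string shows how the question might be phrased against such a schema, and the plain-Python function does the same transitive walk in memory so it runs without a database.

```python
from collections import deque

# Hypothetical schema: (:Service)-[:DEPENDS_ON]->(:Service or :Library).
# A variable-length pattern follows dependency chains of any depth.
CYPHER = """
MATCH (s:Service)-[:DEPENDS_ON*1..]->(l:Library {name: $name})
RETURN DISTINCT s.name AS service
"""

# Hypothetical sample data: each key depends on the names in its list.
DEPENDS_ON = {
    "checkout": ["payments", "left-pad"],
    "payments": ["old-crypto"],
    "search": ["left-pad"],
    "reports": ["checkout"],
}


def dependents_of(target, depends_on=DEPENDS_ON):
    """Return every node that depends on `target`, directly or transitively."""
    # Invert the edges so we can walk from the library back to its consumers.
    reverse = {}
    for src, dsts in depends_on.items():
        for dst in dsts:
            reverse.setdefault(dst, set()).add(src)

    # Breadth-first search over the reversed edges.
    seen, queue = set(), deque([target])
    while queue:
        node = queue.popleft()
        for parent in reverse.get(node, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return sorted(seen)


if __name__ == "__main__":
    print(dependents_of("old-crypto"))
```

With the sample data, `reports` shows up as a dependent of `old-crypto` even though it only reaches it through `checkout` and `payments`, which is the part a flat dependency list tends to miss.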