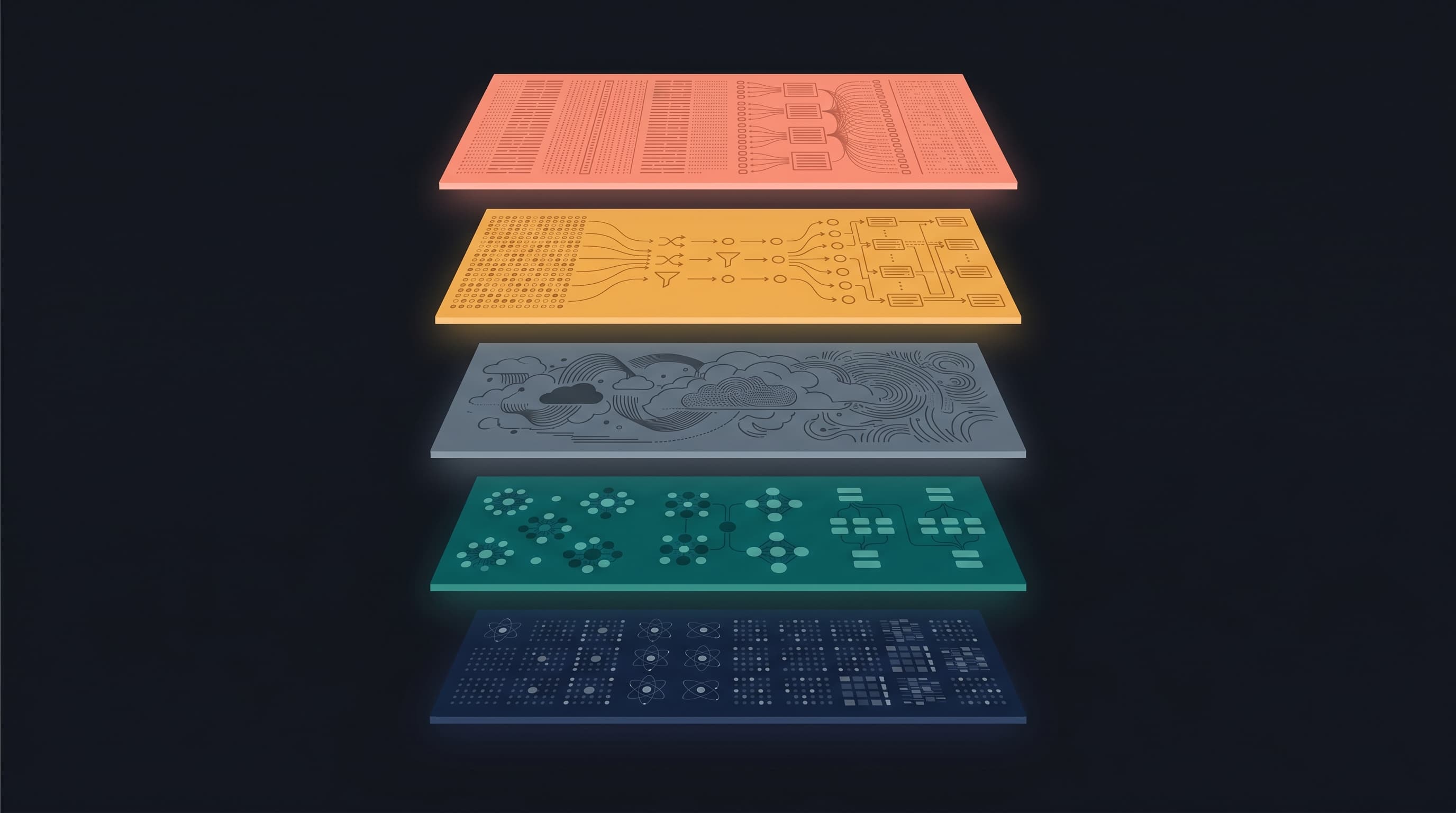

The Building Blocks of an Agent Memory System

Apr 26 · 14 min read

Most agent "memory" systems retrieve too much. They paste the last N turns into the context window, or they dump the top-K results from a vector search, and they hope the model finds the signal. The model...
