Nov 13, 2016

How does a computer machine understand 0s and 1s?

As we all know the programs are stored as binary digits. My question is how exactly does the computer understand 0 & 1?

Responses(5)

What exactly is this bit-story?

Computers are digital. Digital means we build components from "analog" hardware, like transistors, resistors, etc., which work with two states: On and Off. Usually this is done with transistors used as switches, which do not conduct until a certain voltage threshold is reached at their control input. That way, a signal is treated as either roughly 0 V (logical 0) or at least the threshold voltage (logical 1). The resulting components are called "logic gates". Some examples of gates are "AND", "OR", "NAND", "XOR", etc. With these gates, your computer hardware can be built in a way which allows for storing states (e.g. NAND flip-flops) and doing logical things, like mathematical computations. One storage place can save the state of either 0 or 1. This state is called a "bit". Bits work in the binary system. Let me translate that for you to our widely used decimal system:

| Binary | Decimal |
|---|---|
| 0 | 0 |
| 1 | 1 |
| 10 | 2 |
| 100 | 4 |
| 101 | 5 |
| 1010 | 10 |

(Taken from one of my previous posts about 64bit)
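If you want to check the table yourself, here is a minimal Python sketch of the conversion. The function name `binary_to_decimal` is just something I made up for illustration; Python's built-in `int(bits, 2)` does the same job.

```python
def binary_to_decimal(bits: str) -> int:
    """Convert a string of '0'/'1' characters to its decimal value."""
    value = 0
    for bit in bits:
        # Moving one place to the left doubles the value; then add the new bit.
        value = value * 2 + (1 if bit == "1" else 0)
    return value


# The rows of the table above
for bits in ["0", "1", "10", "100", "101", "1010"]:
    print(bits, "->", binary_to_decimal(bits))
```

Each position is worth twice as much as the one to its right, the same way each decimal position is worth ten times as much.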

How your computer handles a bit-stream

In order to do some processing, your computer uses a clock generator with a very high frequency. The frequency is usually one of the selling points of a CPU, like 4 GHz. It means the clock generator alternates between logical 0 and 1 ca. 4,000,000,000 times a second. The signal from the clock generator is used to move information through your logic gates and to give them time to do their thing. Logic gates do not compute instantly, because even changes in the electromagnetic field (important! It's not the electrons which have to travel fast) have to cover the distance and need a certain time to do so.

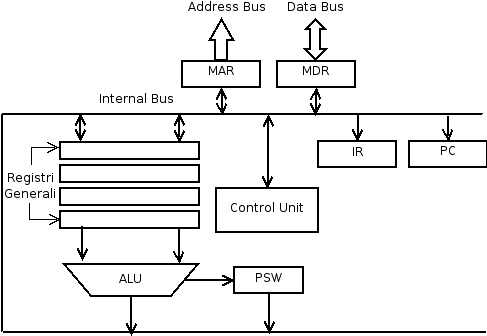
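To get a feeling for the time scales, here is a rough back-of-the-envelope calculation in Python. It assumes the 4 GHz figure from above and uses the speed of light in vacuum as an upper bound; signals in real wires are slower, so treat the distance as a best case, not a measurement.

```python
CLOCK_HZ = 4_000_000_000          # 4 GHz, the example frequency from the post
SPEED_OF_LIGHT_M_S = 299_792_458  # upper bound; real signals in wires are slower

period_s = 1 / CLOCK_HZ                                 # duration of one clock cycle
period_ns = period_s * 1e9                              # same value in nanoseconds
max_distance_cm = SPEED_OF_LIGHT_M_S * period_s * 100   # best-case travel per cycle

print(f"one clock cycle lasts {period_ns:.2f} ns")
print(f"a signal covers at most {max_distance_cm:.1f} cm in that time")
```

So within one cycle a signal can only get a few centimetres at best, which is why the gates need the clock to give them time.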

With the help of the logic gates, a computer is able to pump your data from memory into your CPU. Inside the CPU, there usually are one to several ALUs. ALU stands for Arithmetic Logic Unit and describes a circuit (made out of logic gates) which is able to understand a certain combination of bits and do calculations on them. One ALU usually has an input of several bits which are filled in sequentially, meaning the bit stream is pumped into one side of the ALU until the input is "full". Then the ALU is signalled that it should process the input.

Let's take a look at a simple example. The example ALU works on 8 bits and the input sequence is 11001000. One word is 3 bits long (a "word" is the length of a data entity). The first thing the ALU needs is a command set ("opcodes"), for example ADD (sum of two numbers), MOV (move data), INC (increment data), ... Those commands are evaluated via simple logic. For the sake of simplicity, let's say the command is encoded in 2 bits (which allows 3 commands + NOOP (no operation; do nothing)) and is stored in the first two bits of the input sequence (usually an ALU has a separate opcode input). Let's say the ALU knows the following commands:

| Bitcode | Command |
|---|---|
| 00 | NOOP |
| 01 | ADD |
| 10 | MOV |
| 11 | INC |

Since the first two bits in our sequence are 11, the operation for the computation is "INC". The ALU can then proceed to increment the number which follows. Incrementing only takes one input: the number to increment. So the ALU takes the first word after the command (001) and increments it via mathematical logic (also implemented with logical gates). The result then is 010 which is pushed out of the ALU and then saved in a result memory location.
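The walkthrough above can be simulated in a few lines of Python. This is only a sketch of the toy ALU from this post, not of any real chip: the layout (2 opcode bits followed by two 3-bit words) and the behaviour of MOV and NOOP are my own assumptions, since the example only spells out INC.

```python
WORD_BITS = 3  # word length used in the example above

OPCODES = {"00": "NOOP", "01": "ADD", "10": "MOV", "11": "INC"}


def run_alu(sequence: str) -> str:
    """Decode an 8-bit input and return the result as a 3-bit string.

    Assumed layout: 2 opcode bits, then two 3-bit words.
    """
    opcode = OPCODES[sequence[:2]]
    a = int(sequence[2:2 + WORD_BITS], 2)
    b = int(sequence[2 + WORD_BITS:2 + 2 * WORD_BITS], 2)
    mask = (1 << WORD_BITS) - 1  # keep results within 3 bits (wraps on overflow)

    if opcode == "INC":
        result = (a + 1) & mask
    elif opcode == "ADD":
        result = (a + b) & mask
    elif opcode == "MOV":
        result = a  # assumption: pass the first word through unchanged
    else:  # NOOP
        result = 0  # assumption: nothing useful is produced
    return format(result, f"0{WORD_BITS}b")


print(run_alu("11001000"))  # INC 001 -> 010
```

In hardware there is no `if`: all operations are wired up in parallel and the opcode bits select which result is let through.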

But how can we do logical things in addition to math?

The thing is that there are a few more commands which allow more logical operations, like jump or jump if equal. Those commands have to be executed by another part of the CPU: a Control Unit. It does the data fetching and executes certain memory related operations, but all in all is quite similar to how the ALU works.
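To show what "jump" and "jump if equal" buy you, here is a tiny made-up instruction loop in Python. The instruction names (`INC`, `JEQ`, `JMP`), the two registers and the tuple format are all hypothetical and chosen only for this sketch; a real Control Unit works on encoded bits, not Python tuples.

```python
def run(program):
    """Run a list of (op, *args) tuples and return the final register values."""
    regs = {"a": 0, "b": 3}
    pc = 0  # program counter: index of the next instruction to execute
    while pc < len(program):
        op, *args = program[pc]
        pc += 1  # default: continue with the following instruction
        if op == "INC":
            regs[args[0]] += 1
        elif op == "JEQ":  # jump if the two registers are equal
            x, y, target = args
            if regs[x] == regs[y]:
                pc = target
        elif op == "JMP":  # unconditional jump
            pc = args[0]
    return regs


# Count register a up until it equals register b.
program = [
    ("JEQ", "a", "b", 3),  # 0: finished? then jump past the end
    ("INC", "a"),          # 1: a = a + 1
    ("JMP", 0),            # 2: go back to the comparison
]
print(run(program))
```

The only "decision" is whether the program counter gets overwritten, and that is enough to build loops and branches.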

By manipulating data and storing it in well defined places or pushing it to certain busses, the data can be used in other places, for example it can be sent to the PCI controller, then it can be consumed by the graphics card and sent to the monitor in order to display an image. It can be sent to a USB controller which stores it on your USB drive. It all depends on the operations and the content of certain memory locations, which are used by logical gates to decide stuff.

The digital language is made up of just two 'letters', 0 and 1. A computer, being a digital device, understands only 0 and 1. But how does it understand a 0 as '0' and a 1 as '1'? At the hardware level we have a bunch of elements called transistors (modern computers have billions of them and we are soon heading towards an era where they would become obsolete). These transistors are basically switching devices, turning ON and OFF based on the voltage supplied to their input terminal. If you translate the presence of voltage at the input of the transistor as 1 and the absence of voltage as 0 (you can do it the other way round too), there you have the digital language. Can you now imagine billions of those transistors flipping 0s and 1s synchronously in less than a nanosecond interval, just so you could read this answer? :)

Well, your question is outside the scope of programming altogether and even lower than the hardware level. This is a question for basic physics and electronics.

A computer doesn't have to understand 1 and 0 because this is already how electronics works: it operates on voltage levels. Imagine a light switch. When it is turned off, there is no light in the room; when you manually press the switch, it is on and there is light. We humans just represent the state of the switch (0 - off, 1 - on) or of a signal (0 - absence of an electrical signal, 1 - presence of an electrical signal). Low voltage we represent as 0 and high voltage as 1. Read more in this article: Why 1’s and 0’s?

Years ago you had to turn switches on and off manually, but now there are transistors. Here is a good short video explaining how a transistor works.

Hello - when you put a CD-ROM into a disc drive, which I am assuming has data stored on it as 1s and 0s, and the transistor copies that information to read it, how does it read it? I'm thinking, obviously a laser, but how does that laser recognise that it is reading a 0 or a 1? If it acts like a camera, how does the camera know it is recognising a 0 or a 1? I guess you program it. But how do you program it to read and recognise the information?

Back when computers read things from cards with holes in them, I can understand that, because a hole, let's say, is 0 and no hole is, let's say, 1 (correct me if I'm wrong!). I can understand that because it's a physical action.

Any answers would be greatly appreciated.

A computer doesn't actually "understand" anything. It merely provides you with a way of information flow — input to output. The decisions to transform a given set of inputs to an output (computations) are made using boolean expressions (expressed using specific arrangements of logic gates).
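As an illustration of "specific arrangements of logic gates", here is a small Python sketch that builds an adder out of nothing but a NAND function. The helper names are mine, and a ripple-carry adder is just one well-known arrangement, not necessarily what any particular CPU uses.

```python
def nand(a: int, b: int) -> int:
    """The only primitive gate used here; everything else is built from it."""
    return 0 if (a and b) else 1


def not_(a: int) -> int:
    return nand(a, a)


def and_(a: int, b: int) -> int:
    return not_(nand(a, b))


def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))


def xor(a: int, b: int) -> int:
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def full_adder(a: int, b: int, carry_in: int):
    """Add three bits; return (sum bit, carry-out bit)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return total, carry_out


def add_bits(x: str, y: str) -> str:
    """Add two equal-length bit strings, rightmost bit first (ripple carry)."""
    carry = 0
    out = []
    for a, b in zip(reversed(x), reversed(y)):
        s, carry = full_adder(int(a), int(b), carry)
        out.append(str(s))
    out.append(str(carry))  # the final carry becomes the leading bit
    return "".join(reversed(out))


print(add_bits("101", "001"))  # 5 + 1 = 6 -> 0110
```

Nothing in there "knows" what a number is; the addition falls out of how the gates are connected.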

It is up to us (more precisely, the software we use) to interpret this information based on a given premise. If you're given the binary string 11111111 00000000 11111111 (the binary representation of the RGB values (255, 0, 255)) with extra information indicating that it represents a color, your software will interpret it as a signal to display the color magenta.

Why do computers use binary, anyway?
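The RGB example can be tried out in Python. This is a minimal sketch; `decode_rgb` is a name I picked for illustration. It shows that the same 24 bits give a different answer depending on the premise you read them with.

```python
def decode_rgb(bit_string: str) -> tuple[int, int, int]:
    """Interpret three space-separated 8-bit groups as (red, green, blue)."""
    red, green, blue = (int(group, 2) for group in bit_string.split())
    return red, green, blue


bits = "11111111 00000000 11111111"

print(decode_rgb(bits))               # read as a color: (255, 0, 255)
print(int(bits.replace(" ", ""), 2))  # read as one plain number: 16711935
```

The bits themselves never change; only the interpretation does.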

but now there are transistors...

Coming to the question at hand, modern computers use tiny devices called Transistors to represent the 1s and 0s.

To understand the principle and the working behind them... @mevrael has pointed you to the right video — How does a transistor work? - Veritasium

Here is a great TED-Ed video — How transistors work? - TED-Ed — with a historical overview of how the early computers handled 1s and 0s, the problems we faced, and how the modern-day computers, using transistors, are absolved of these problems.