Feed

Discussion

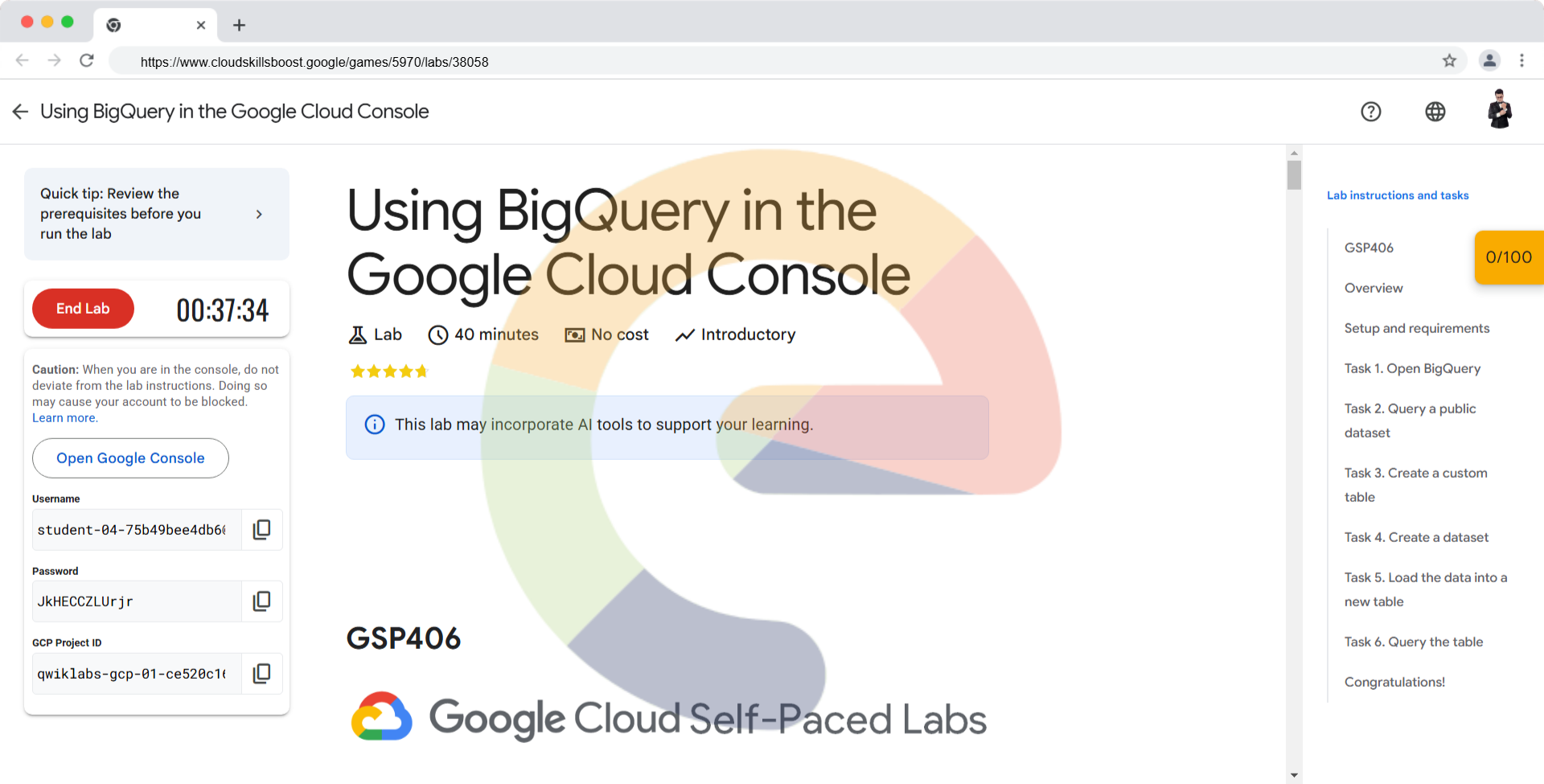

Using BigQuery in the Google Cloud Console - GSP406

Overview: Storing and querying massive datasets can be time-consuming and expensive without the right hardware and infrastructure. BigQuery is an enterprise data warehouse that solves this problem by enabling super-fast SQL queries using the processin...

eplus.dev · 8 min read

#using-bigquery-in-the-google-cloud-console-gsp406 #using-bigquery-in-the-google-cloud-console #gsp406

Hu xinya

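Great post! One tip for new users: always preview your data before running full queries, as this helps avoid accidentally processing large amounts of data and incurring unexpected costs. Keep in mind that `SELECT * FROM table LIMIT 1000` by itself does not reduce the bytes billed on a non-clustered table, because `LIMIT` only trims the rows returned, so the table preview in the console or a dry run is the safer check.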
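
For anyone who wants to check how much data a query would process before running it, a dry run is one option. Here is a minimal sketch, assuming the `google-cloud-bigquery` Python client is installed and credentials are already configured. The helper names, the table name in the comment, and the price constant are my own placeholders, not anything from the lab, so check current pricing before relying on the dollar figure.

```python
"""Sketch: estimate what a BigQuery query would scan before running it."""

TIB = 2 ** 40  # bytes in one tebibyte

# Assumption: an illustrative on-demand price per TiB; check current pricing for your region.
ASSUMED_USD_PER_TIB = 6.25


def estimate_cost_usd(bytes_processed: int, usd_per_tib: float = ASSUMED_USD_PER_TIB) -> float:
    """Convert a byte count into an approximate on-demand cost."""
    return bytes_processed / TIB * usd_per_tib


def dry_run_bytes(sql: str) -> int:
    """Ask BigQuery how many bytes `sql` would process, without running it."""
    # Imported lazily so estimate_cost_usd works without the client library installed.
    from google.cloud import bigquery

    client = bigquery.Client()
    # dry_run=True validates the query and reports bytes without executing it;
    # use_query_cache=False keeps a cached result from hiding the real scan size.
    job_config = bigquery.QueryJobConfig(dry_run=True, use_query_cache=False)
    job = client.query(sql, job_config=job_config)
    return job.total_bytes_processed


# Example (hypothetical table name; needs credentials):
# scanned = dry_run_bytes("SELECT name FROM `my_project.my_dataset.my_table`")
# print(f"{scanned} bytes, about ${estimate_cost_usd(scanned):.4f}")
```

Running the dry run on the `SELECT *` version of a query and then on a version that names only the columns you need makes the difference in scanned bytes easy to see.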