Sep 8, 2025

How the Self-Attention Mechanism Works: The Core of Transformers & LLMs

When we talk about Large Language Models (LLMs) like GPT, BERT, or LLaMA, one phrase always comes up: “Self-Attention”. Introduced in the seminal paper “Attention Is All You Need” (2017), this mechanism revolutionized natural language processing by a...

bittublog.hashnode.dev · 3 min read

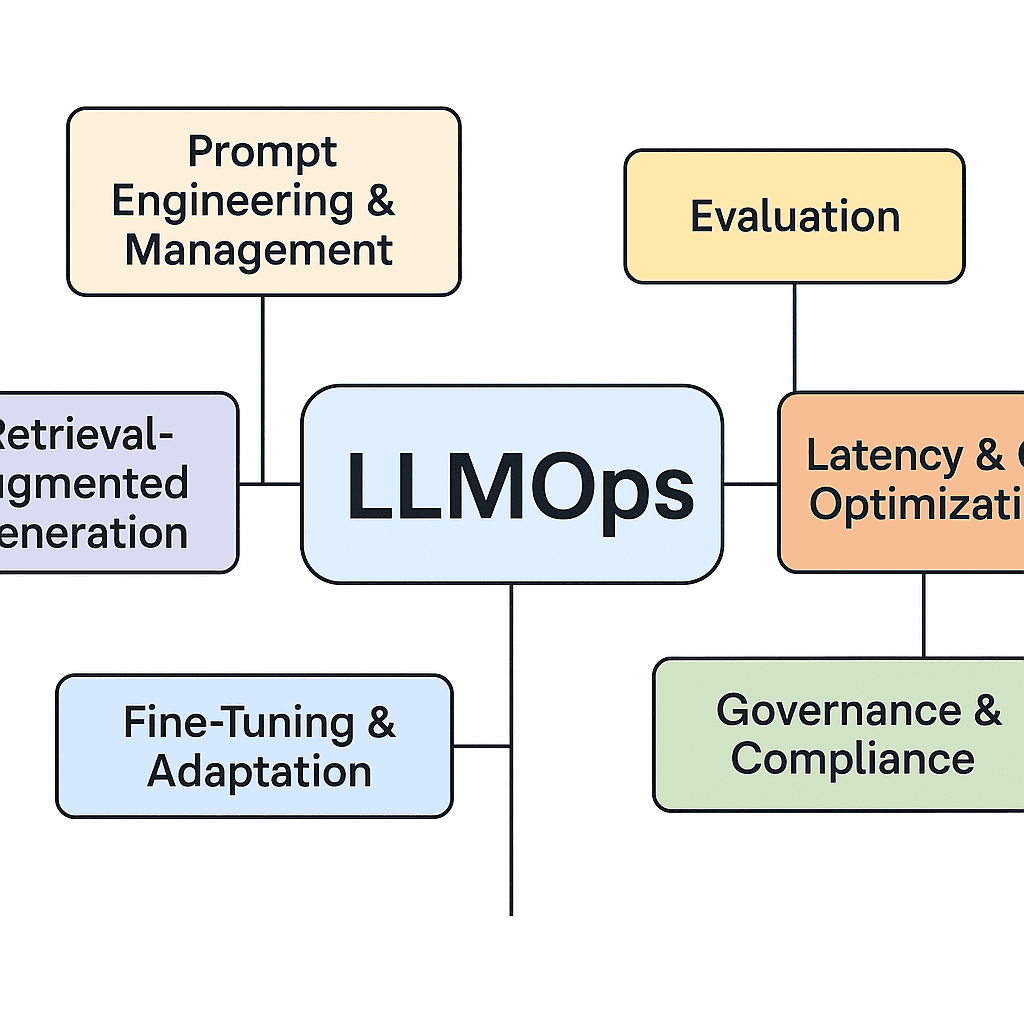

#mlops-machinelearning-aws-amazonsagemaker-modeltraining-modelversioning-modeldeployment-continuousintegration-continuousdeployment-machinelearningpipelines-datascience-cloudcomputing-ai-devops-modelregistry-sagemakerendpoints-codepipeline-docker-autoscaling-cloudwatch #llmoptimization-rag-finetuning-promptengineering-aimodels-retrievalaugmentedgeneration-airesearch-campus360-openai-langchain-supabase-vectorembeddings #data-science #artificial-intelligence #devops
