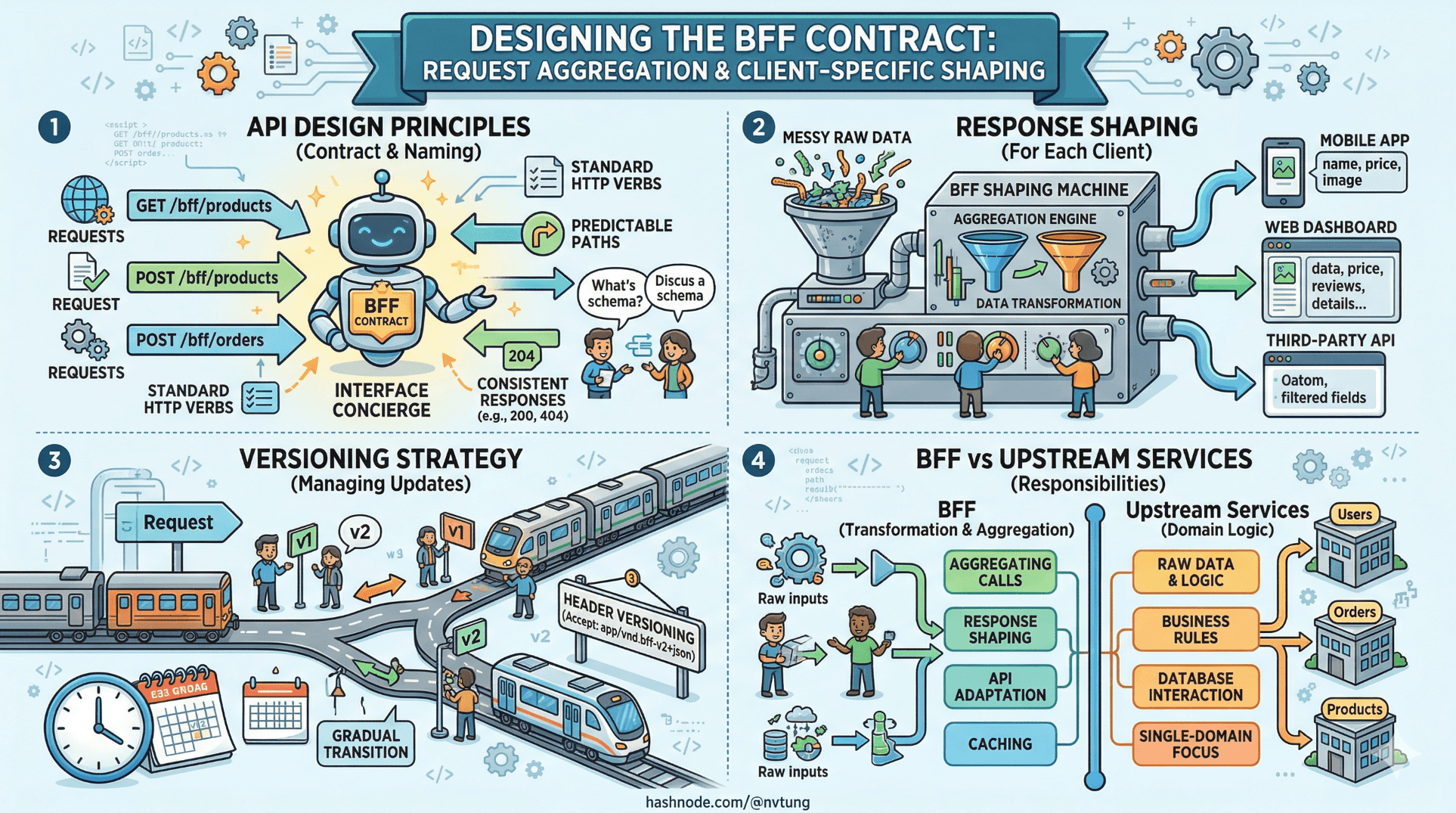

Designing the BFF Contract: Request Aggregation & Client-Specific Shaping

In the previous article, we established when a BFF earns its overhead. This article assumes you have made that decision and are now facing the harder question: how do you actually design the thing wel

devpath-traveler.nguyenviettung.id.vn · 15 min read

#bff #backend-for-frontend #api-design #versioning #api-contract #frontendarchitecture #vuejs #request-batching #caching #microservices #composition #backend-orchestration #payload-design #contract-testing #performance

This is where most client-facing complexity should be handled. A well-designed BFF (Backend-for-Frontend) contract is not just about aggregating requests; it is about shaping data per client so that each frontend gets only what it needs. That means reducing over-fetching, decoupling UI changes from core services, and cutting latency by running independent downstream calls in parallel. The real challenge is keeping the contract stable while allowing client-specific flexibility, without turning the BFF into a monolith. Done right, it becomes a thin orchestration layer that improves frontend velocity and system scalability.
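To make the aggregation and shaping points concrete, here is a minimal TypeScript sketch. Everything in it is hypothetical and chosen for illustration: the `User` and `Order` payloads, the `Downstream` interface, the `web`/`mobile` client split, and the function names are assumptions, not something taken from the article. It shows the two ideas from the comment: independent downstream calls run concurrently, and a separate pure function decides which fields each client receives.

```typescript
// Hypothetical downstream payloads; real core services define their own.
interface User { id: string; name: string; email: string; avatarUrl: string }
interface Order { id: string; total: number; status: string; items: string[] }

// Assumed set of clients served by this BFF.
type Client = "web" | "mobile";

// Assumed abstraction over the downstream services, so it can be stubbed.
interface Downstream {
  fetchUser(userId: string): Promise<User>;
  fetchOrders(userId: string): Promise<Order[]>;
}

// Client-specific response shapes: this is the BFF contract.
interface MobileDashboard { name: string; openOrderCount: number }
interface WebDashboard { user: User; orders: Order[] }

// Pure shaping step: each client gets only the fields it renders.
function shapeDashboard(
  client: Client,
  user: User,
  orders: Order[],
): WebDashboard | MobileDashboard {
  if (client === "mobile") {
    // Mobile shows a summary, so the full order list is not sent.
    return {
      name: user.name,
      openOrderCount: orders.filter((o) => o.status === "open").length,
    };
  }
  return { user, orders };
}

// Aggregation step: the two calls are independent, so they run concurrently.
// Promise.all rejects as soon as either call rejects.
async function getDashboard(
  client: Client,
  userId: string,
  services: Downstream,
): Promise<WebDashboard | MobileDashboard> {
  const [user, orders] = await Promise.all([
    services.fetchUser(userId),
    services.fetchOrders(userId),
  ]);
  return shapeDashboard(client, user, orders);
}
```

Keeping `shapeDashboard` free of I/O is one way to keep the layer thin: the contract for each client can be unit-tested without stubbing any service, and a UI change touches only the shaping function rather than the core services.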