1d ago · 4 min read · Every low-code platform looks great in the demo. Drag, drop, ship — 50 records fly. Then it hits a real tenant with a few million rows and real concurrency, and the list view takes 12 seconds, the det

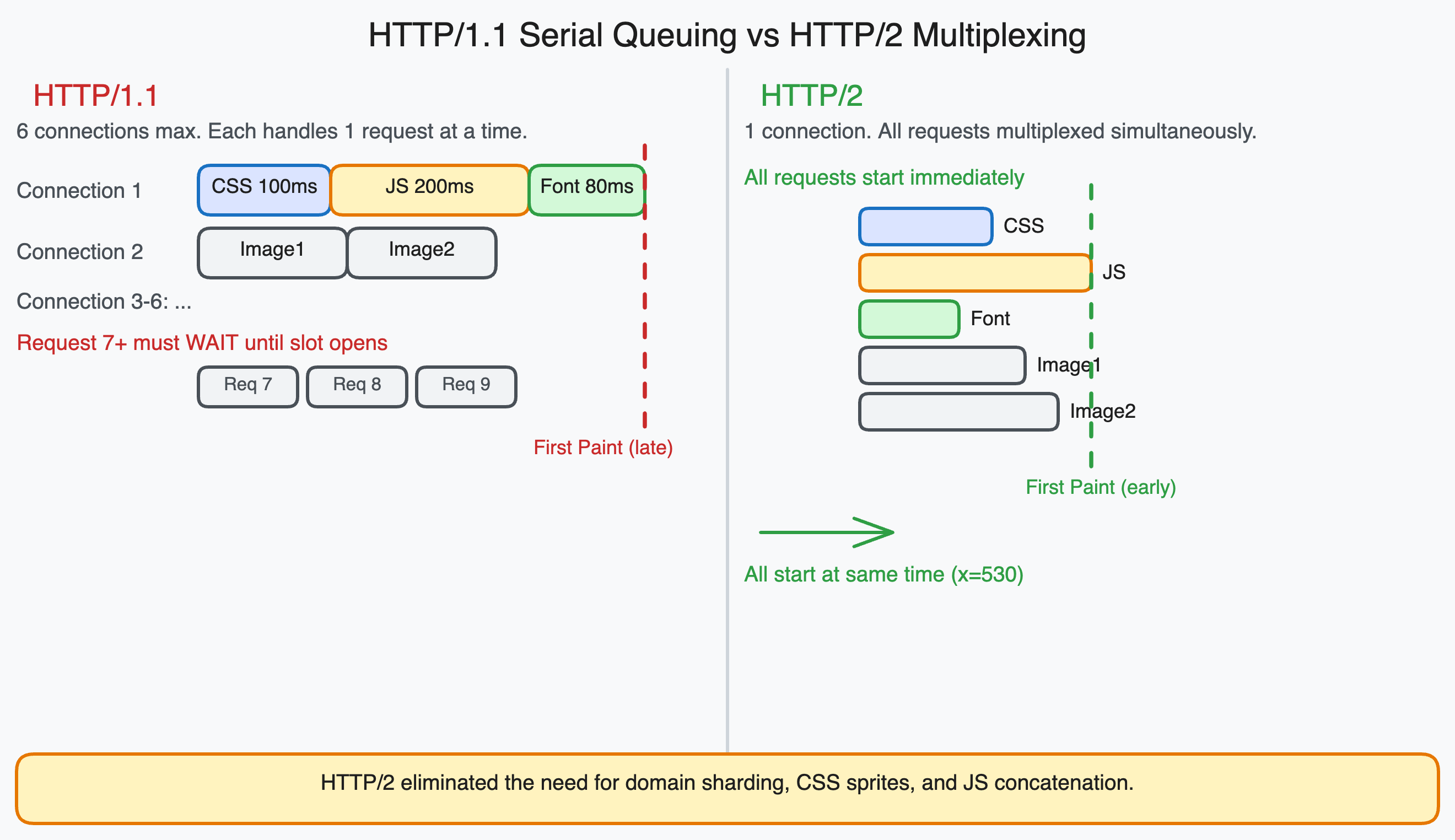

1d ago · 9 min read · Related: Network Optimization for SPAs and React Apps covers the modern optimization techniques that work with HTTP/2 rather than around HTTP/1.1's limitations. In 2012, a frontend developer optimizin


1d ago · 8 min read · What is a memory leak? A memory leak happens when your application holds references to objects it no longer needs. The garbage collector cannot free them — because from its perspective, they are still


1d ago · 6 min read · The Latency Tax: Quantifying the Engineering and Financial Costs of Web Latency. Modern stakeholders often ask, “Does an extra 400ms of latency really matter?” The short answer: yes—for many customer-f

2d ago · 6 min read · Most gamers tweak their GPU settings and internet plans but ignore the one thing that directly affects server connection time: their DNS. Every time you join a match, your device sends a DNS query to