Apr 7 · 12 min read · LLM Memory Evaluation: Benchmarking Agent Recall and Retention LLM memory evaluation systematically assesses how well large language models store, retrieve, and use information over time. It quantifies an AI agent's capacity to retain relevant contex...

Jan 16 · 3 min read · Context This was not a theoretical exercise. I was preparing for a real job interview with real expectations and limited time. The interview required two concrete deliverables: a solution architecture document and an executive-level interview presenta...

Sep 19, 2025 · 6 min read · When we started rolling out voice and chat agents at Hillflare, it felt like opening a thousand tabs at once. Every new client, every script, every accent, every “quick tweak” to a prompt multiplied the ways things could go right… or sideways. Readin...

Nov 22, 2024 · 5 min read · Arxiv: https://arxiv.org/abs/2411.01322v1 PDF: https://arxiv.org/pdf/2411.01322v1.pdf Authors: Jeffrey N. Chiang, John Lee, Simon A. Lee Published: 2024-11-02 What Does the FEET Paper Claim? At the heart of the paper "FEET: A Framework For Evaluatin...

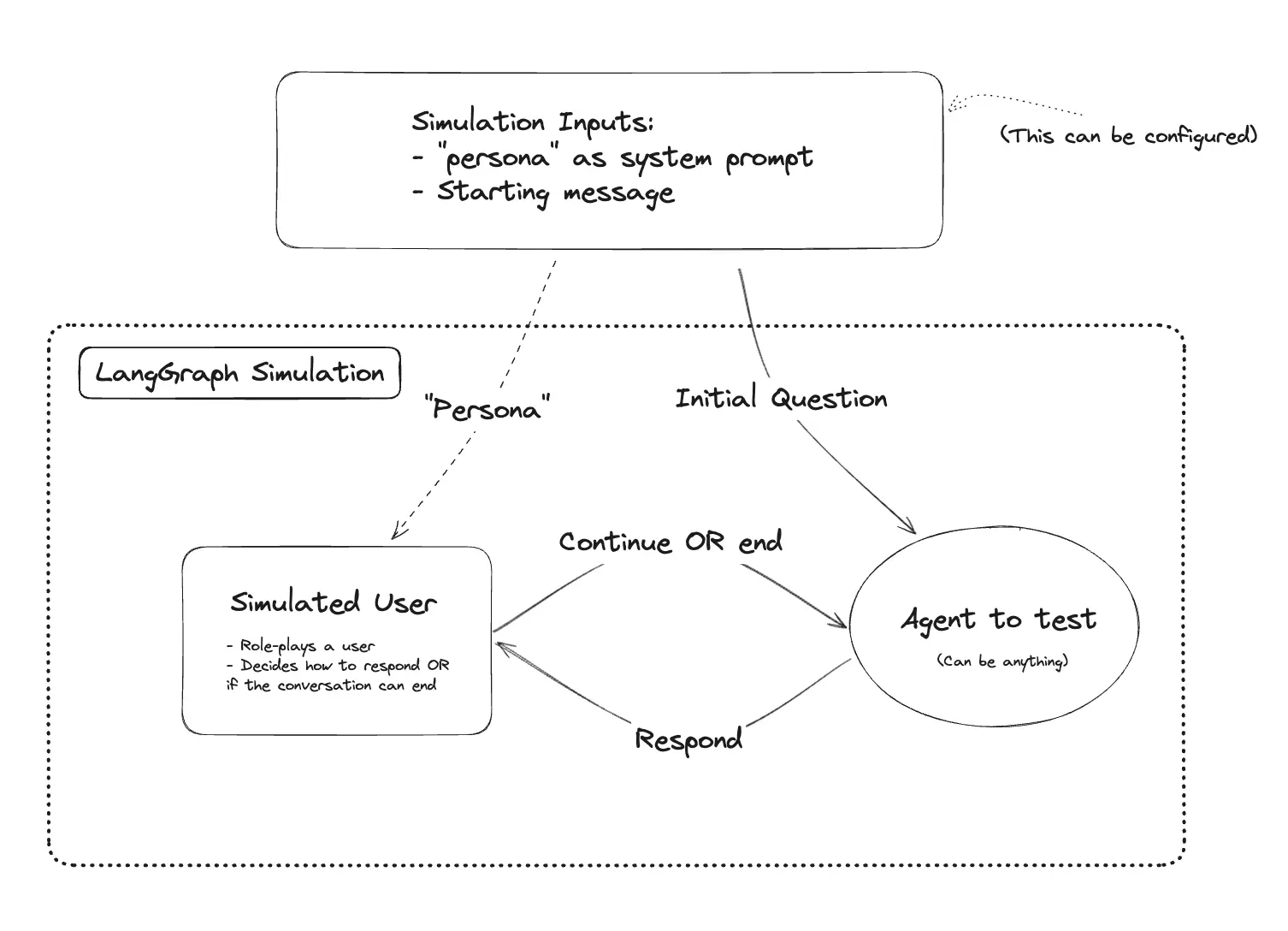

Oct 16, 2024 · 4 min read · When building a chatbot agent, it's important to evaluate its performance and user satisfaction. One effective method is user simulation, which involves creating virtual users to interact with the chatbot and assess its responses. This approach allow...
