Apr 17 · 12 min read · Two years ago, fine-tuning a large language model required a rack of A100s, a machine learning team, and a five-figure cloud bill. In 2026, a single RTX 4070 Ti is enough to specialize a 7B model on your domain data — in an afternoon. That shift happ...

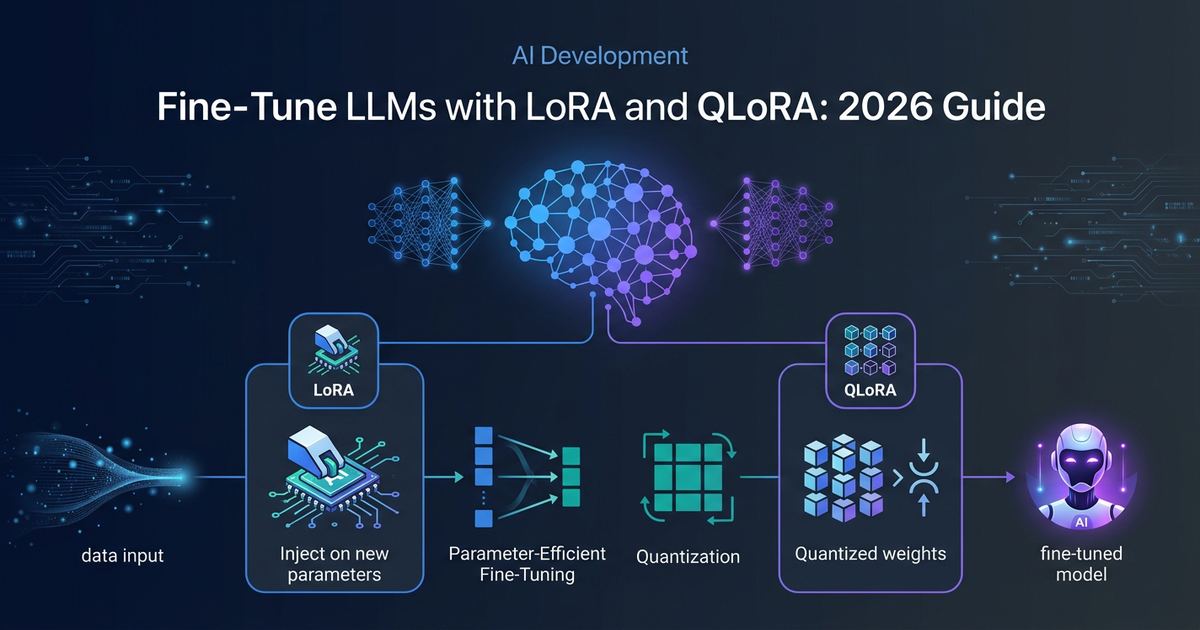

Mar 9 · 14 min read · TLDR: Full fine-tuning updates every model weight, which is expensive in memory, compute, and storage. PEFT methods update only a small trainable slice. LoRA learns low-rank adapters on top of frozen

Aug 20, 2024 · 5 min read · Efficient learning techniques make it possible to adapt large language models (LLMs) to specific tasks quickly and economically. With approaches such as LoRA, qLoRA, and PEFT, these models can be customized without...

Oct 16, 2023 · 9 min read · Overview: In this blog post, we have explored the concept of tuning Large Language Models (LLMs) and their significance in the field of Natural Language Processing (NLP). We have discussed why fine-tuning is crucial, explaining how it allows us to ada...
