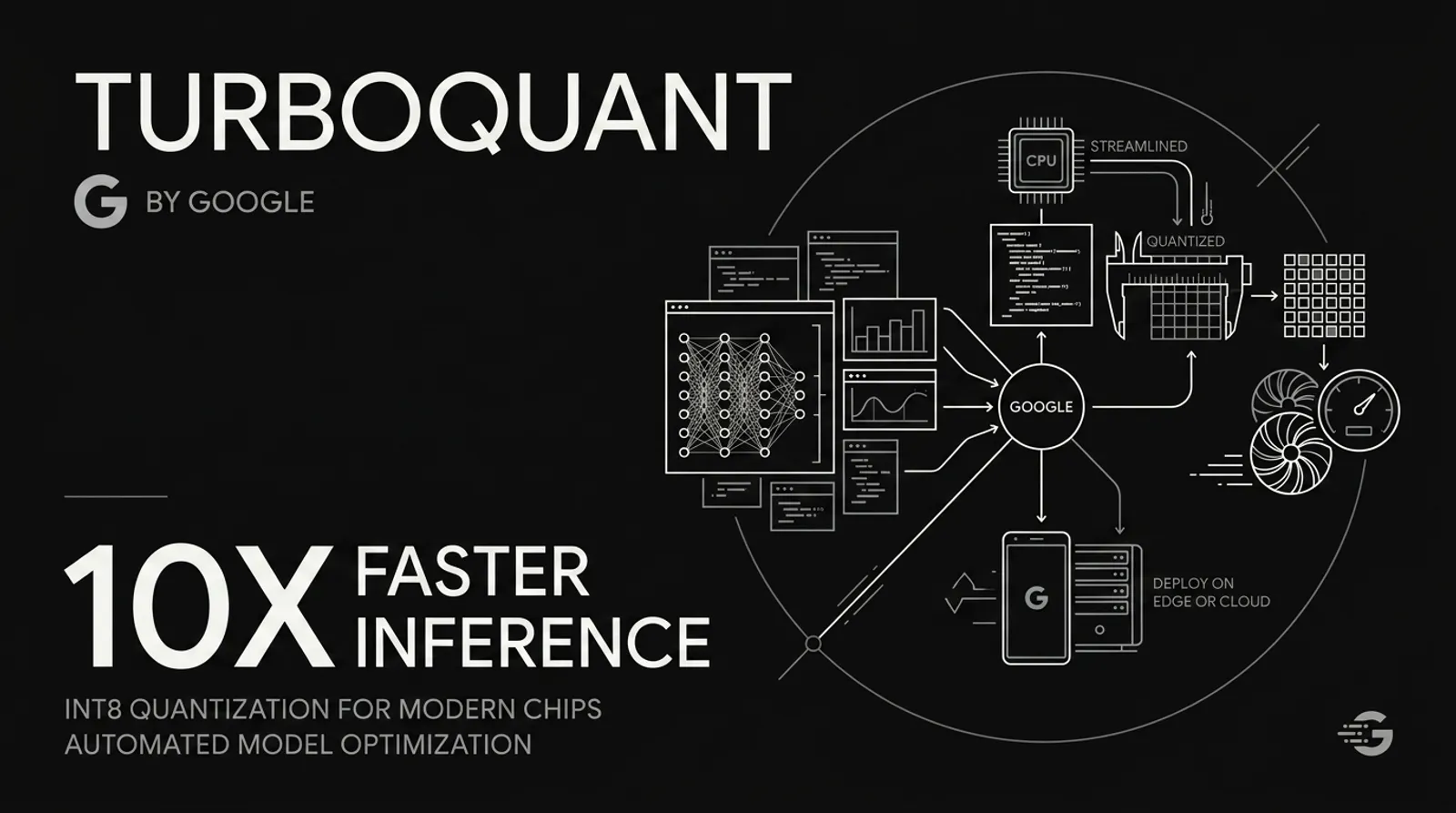

Google TurboQuant: 6x KV Cache Compression Changes AI Inference Economics

May 2 · 10 min read

The key-value (KV) cache is the most expensive part of running a large language model, and until now nobody had compressed it without sacrificing accuracy. At ICLR 2026, Google Research published TurboQuant: a two-stage compression algorithm that reduces KV...
