May 16 · 13 min read · A hands-on guide for AI engineers who care about embedding compression, retrieval latency, and not having to rebuild their index every time the data shifts. If you build retrieval-augmented generation...

May 8 · 7 min read · (Photo by Brett Sayles on Pexels) You're staring at your AI agent's lackluster responses, wondering why it keeps hallucinating facts about your company's products. The truth is, most developers jump straight into building agents without understanding t...

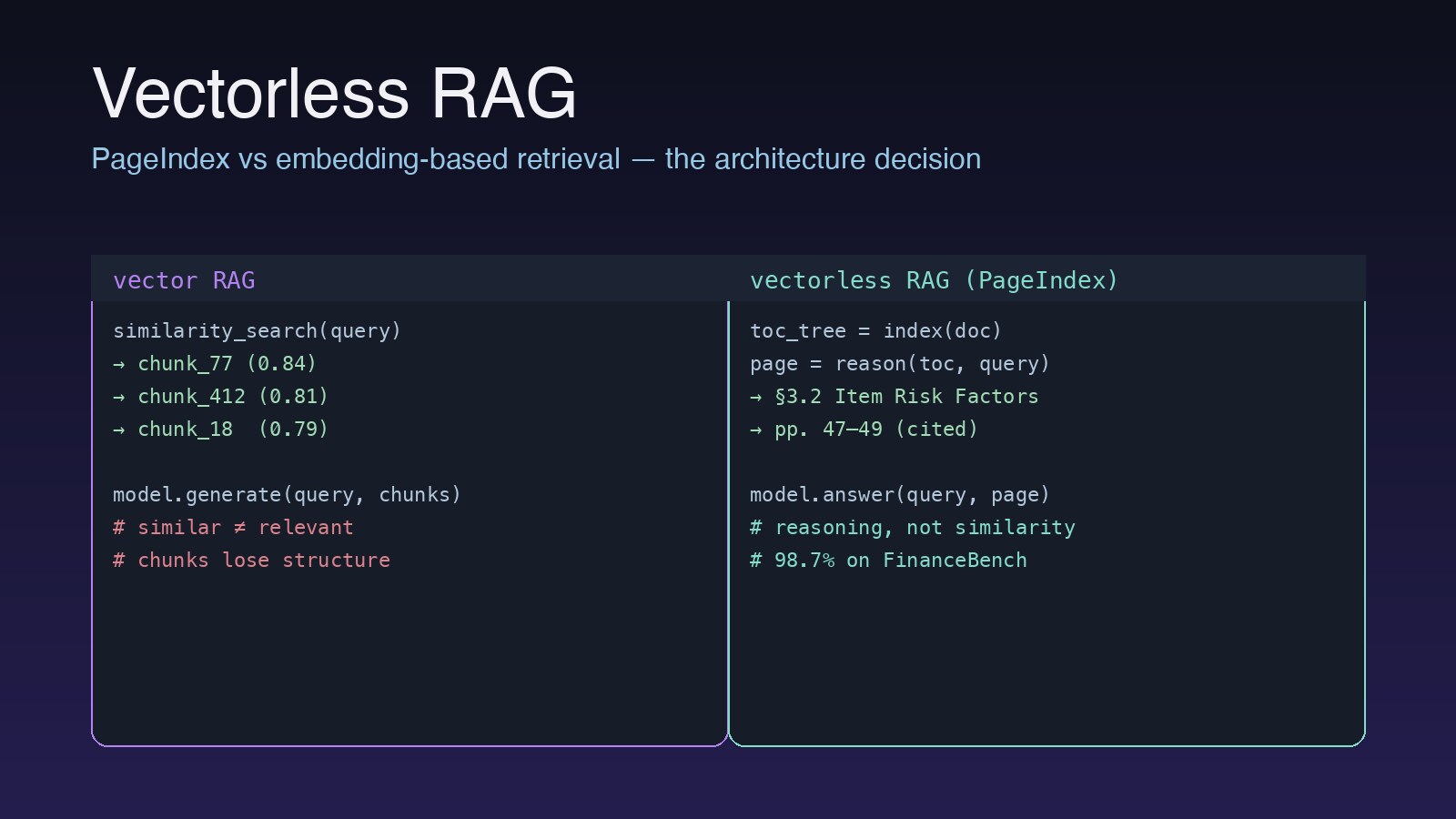

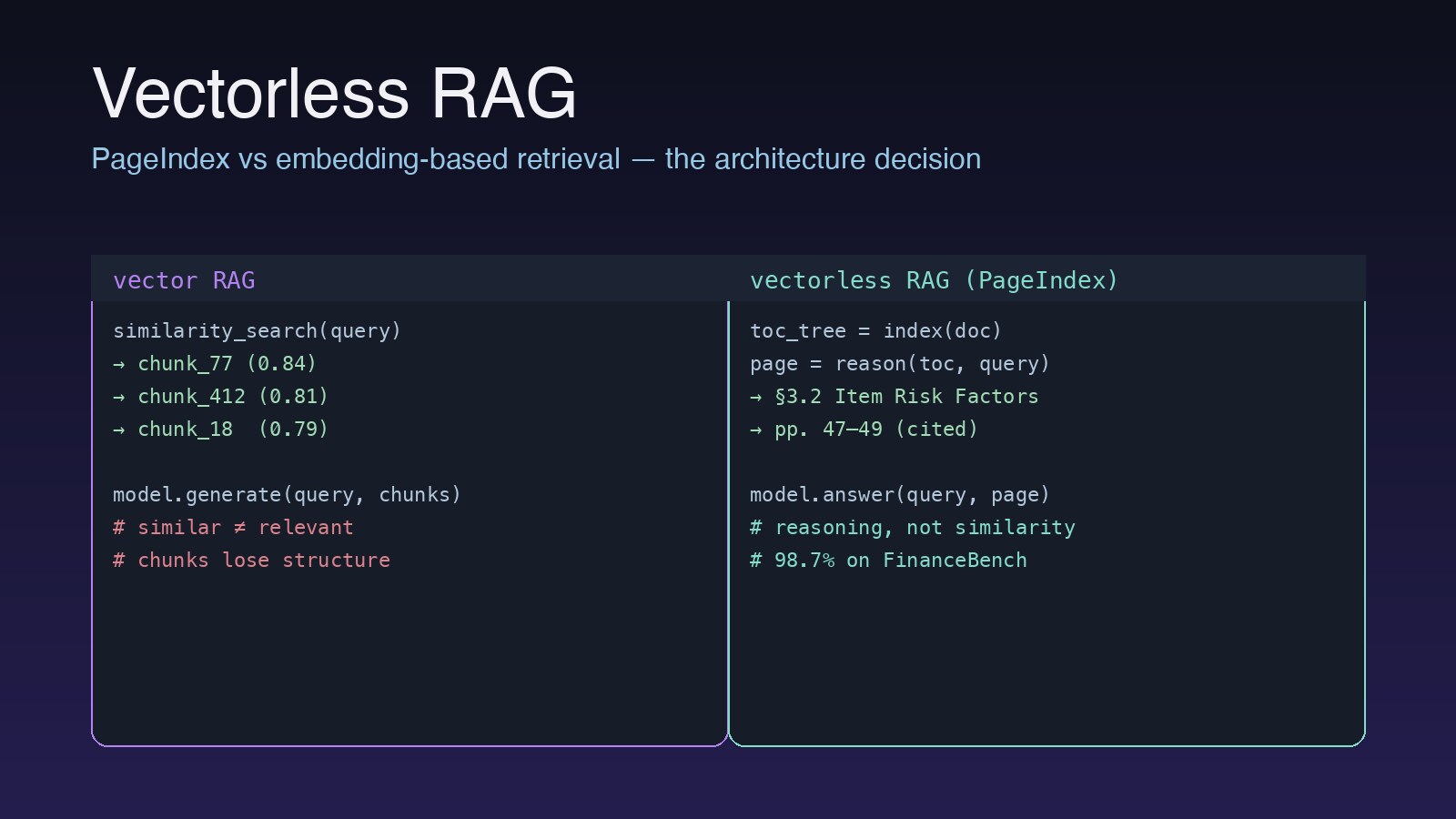

May 8 · 8 min read · On May 7, 2026, VectifyAI/PageIndex was the #4 trending repo on GitHub, picking up another 953 stars in a single day. The repository description is six words long and explains the entire bet: 📖 Read the full version with charts and embedded sources...

May 6 · 8 min read · In Postgres:

```sql
-- CREATE EXTENSION IF NOT EXISTS vector;
```

2) Database schema (pgvector + metadata)

```sql
-- Documents table
CREATE TABLE IF NOT EXISTS documents (
  doc_id TEXT PRIMARY KEY,
  source TEXT,
  title TEXT,
  created_at TIMESTAMPTZ DEFAULT...
```

May 2 · 4 min read · If you build AI applications, you need to understand embeddings. They power semantic search, RAG systems, recommendation engines, clustering, anomaly detection, and dozens of other applications. Yet most developers treat them as a black box. Let's op...

May 2 · 6 min read · You've probably had this experience: you ask ChatGPT about your company's policies, and it confidently makes something up. That's because LLMs only know what they were trained on; they don't know about your documents, your data, or your business. RA...

Apr 22 · 10 min read · Embeddings Explained: How AI Turns Words Into Numbers That Actually Mean Something. The surprisingly elegant math that lets computers understand that "dog" and "puppy" are related, and why this powers everything from ChatGPT to your Netflix recommend...