Apr 27 · 7 min read · You're a web developer, constantly pushing the boundaries of what's possible in the browser and on the server. For years, integrating sophisticated Artificial Intelligence into web applications often meant hefty cloud bills, data privacy concerns, or...

Apr 16 · 7 min read · You've got a 1.7 billion parameter model. You want it running locally. In a browser tab. No server, no API keys, no Docker containers. Sounds impossible, right? A few months ago, I would've agreed with you. But 1-bit quantized models like Bonsai 1.7B...

Apr 6 · 6 min read · Four days ago, Google released Gemma 4 under Apache 2.0. The headline models are the 31B dense and 26B MoE variants that compete with Llama on Arena AI. But the models I've been running nonstop since release are the ones nobody is talking about: Gemm...

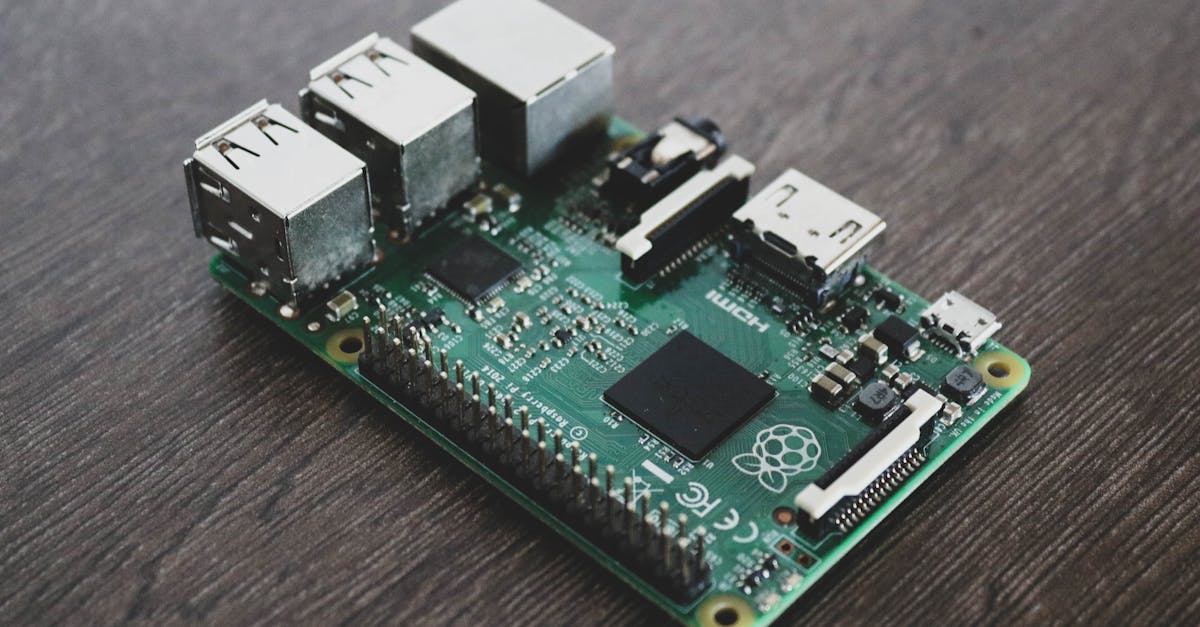

Mar 25 · 2 min read · The Problem I Want to Solve: Recruiters pay $0.05-$0.20 per document to cloud parsing services like DaXtra, RChilli, and Affinda. Beyond cost, there's a privacy issue: candidate data leaves the device and travels to vendor servers. I want to explore w...

Feb 13 · 5 min read · No external software, no cloud, no complexity: just install and start chatting. TL;DR: I built a Chrome extension that runs Llama, DeepSeek, Qwen, and other LLMs entirely in-browser. No server, no Ollama, no API keys. Here's the full story: why I built...
