Fleet 1.0: Finding the One Slow Rank in a 64-GPU Job From the Cluster Side

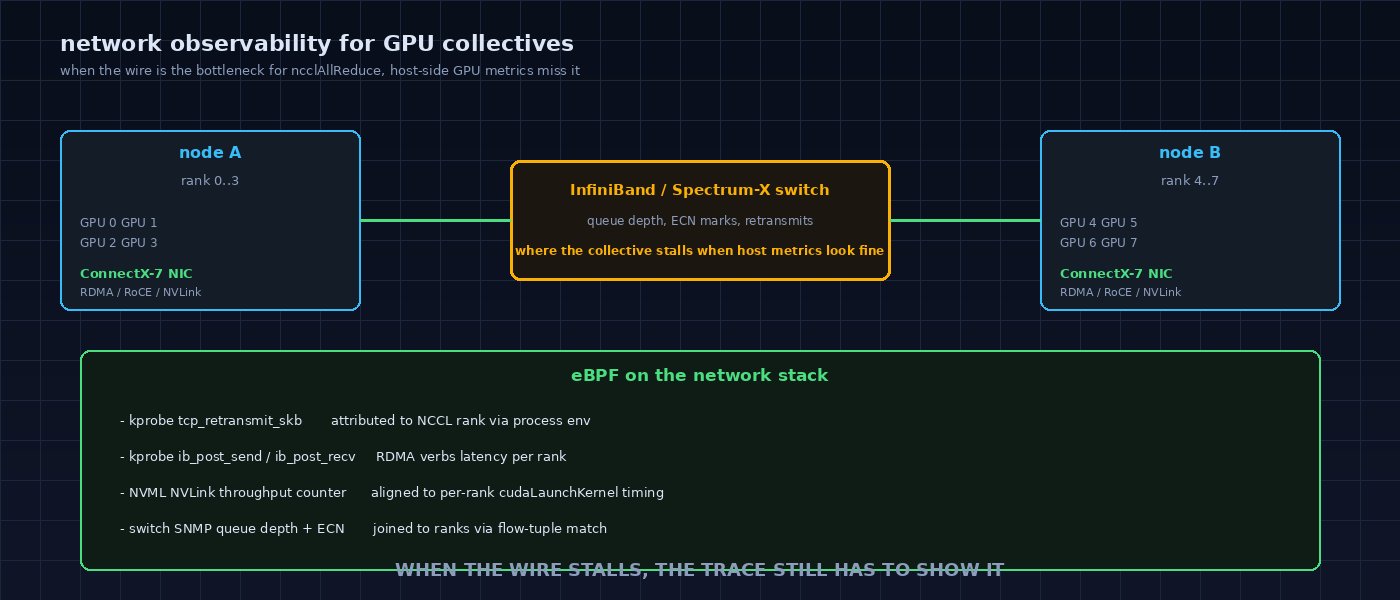

1d ago · 7 min read · TL;DR In a distributed training job, every node can look healthy on its own dashboard while throughput across the job quietly drops. The cause is almost never visible per host, because the signal is r
