I Tested Flowise, Dify, and n8n Across 30+ Client Deployments. Here Is My Verdict.

Apr 7 · 18 min read · Three clients came to me last month with almost the same question. One wanted a customer support chatbot trained on 800 pages of product docs. One was building an internal knowledge tool for a 40-person team. The third needed a fully automated lead q...

Chatbot: Run Flowise and Ollama locally

Dec 4, 2024 · 1 min read · Prerequisites. Server: ollama run llama3.2; ollama run nomic-embed-text:la. Install Flowise locally using NPM: npm install -g flowise. Start Flowise: npx flowise start. If successful: Steps: 1. Create documents stores 2. Click Document store an...

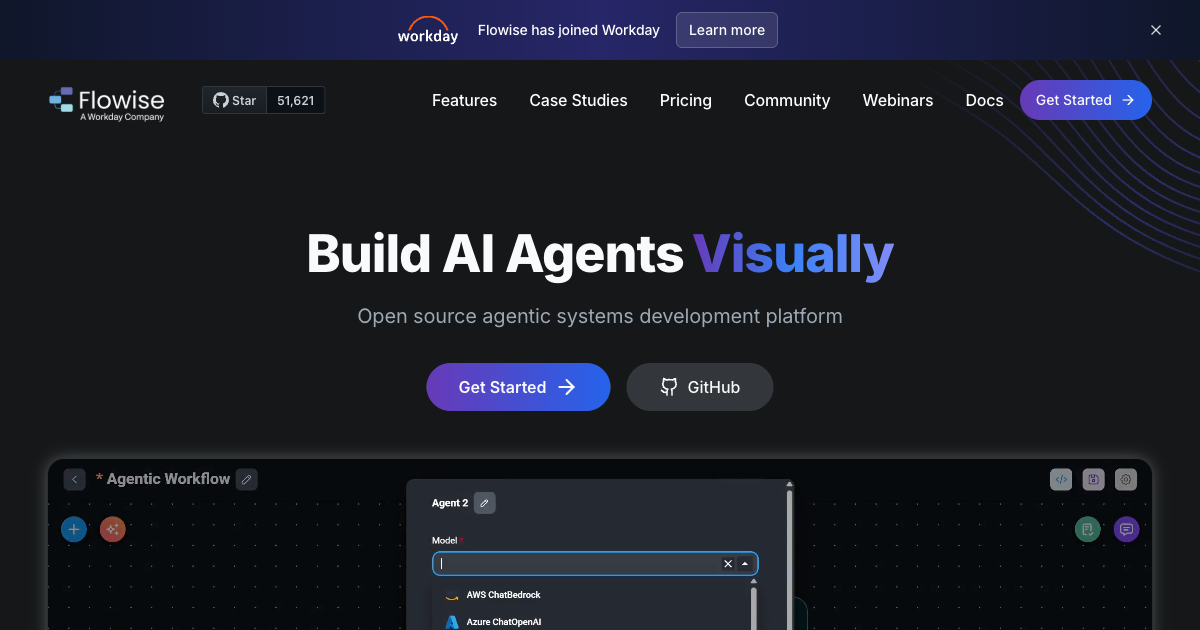

Unlocking the Potential of AI with Flowise

Nov 16, 2024 · 3 min read · In the rapidly evolving world of AI, developers are constantly seeking tools that streamline the process of building and deploying applications. Enter Flowise, an open-source, low-code platform designed to simplify the creation of customized LLM (Lar...

Using Flowise with Local LLMs.

May 12, 2024 · 7 min read · TL;DR. Using Flowise with local LLMs served through Ollama allows for the creation of cost-effective, secure, and highly customizable AI-powered applications. Flowise provides a versatile environment that supports the integration of various tools and compone...

Installing Langflow and Flowise.

May 6, 2024 · 9 min read · TL;DR. Installing Langflow and Flowise involves setting up an environment using Miniconda, installing Node.js via NVM, and creating the necessary directories and scripts. Langflow, a user-friendly interface for LangChain, allows easy AI application c...
